Research Layers - Stanislaw's Substack

Research Layers

Why Linear Chats Are Dead (and What's Replacing Them)

We’ve become accustomed to communicating with AI as an endless feed. You ask a question, get an answer, follow up... and after 10 minutes you lose the thread of the conversation. Context blurs, and important details get lost in the scroll.

But our thinking is not linear. It branches.

Insight: Why do we force our thoughts into a "message list" when research is really a tree? We've rethought the approach to context. Meet Research Layers.

Live Implementation: This concept is already live and open source. Check out the implementation at KeaBase/kea-research.

The Evolution of UX: From Linear Chaos to Structured Layers of Research

How it works

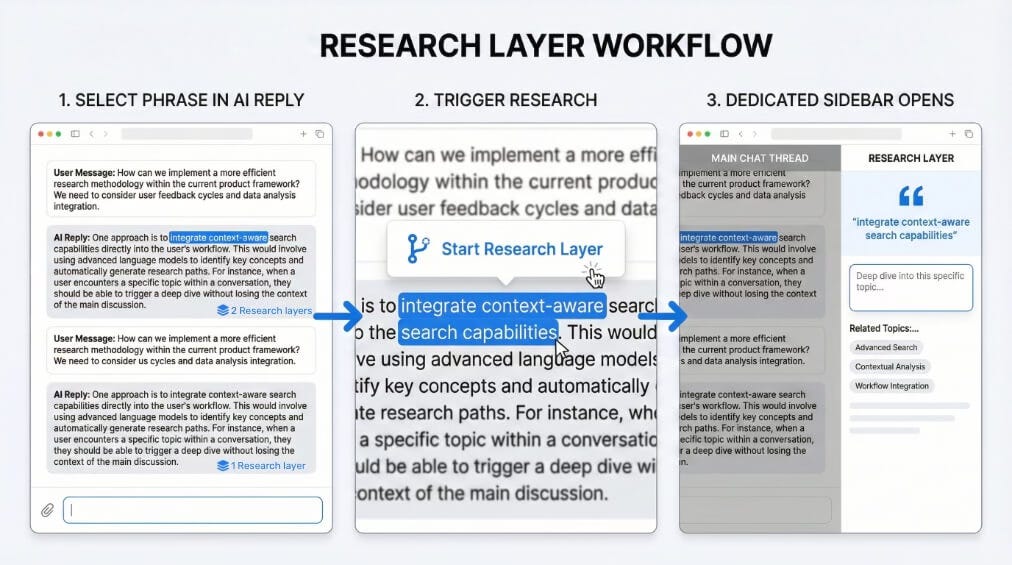

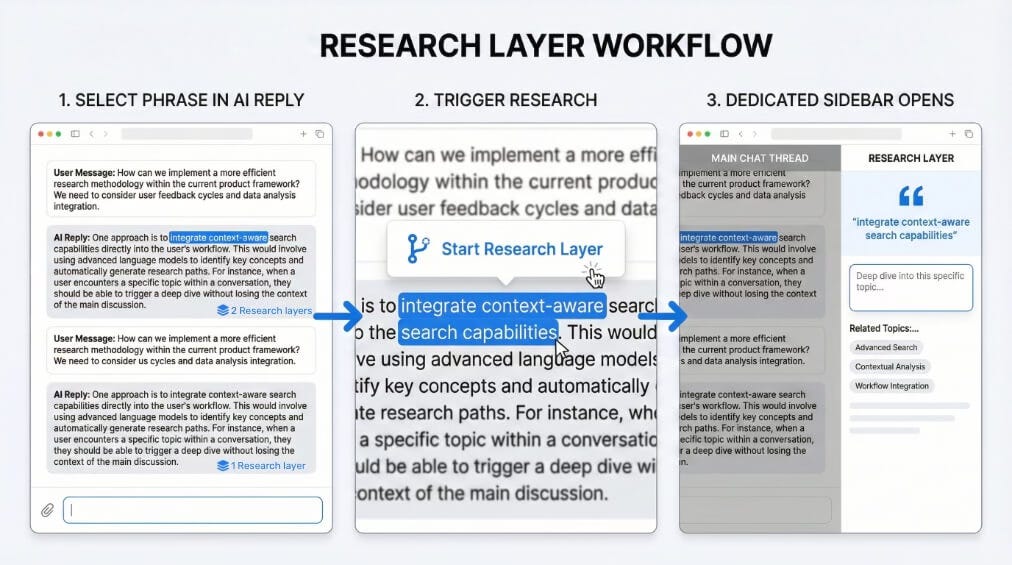

Instead of cluttering the main chat with follow-up questions, you create isolated layers. This works in three steps:

Partial Selection

You don’t need to quote the entire message. Use your cursor to highlight the specific phrase, term, or point in the model’s response that needs clarification.

Branching

Click the “Start Research Layer” button. This is like taking a side road off the highway: you explore the area and then return to the main route.

Split View

A sidebar opens. Here, the model sees only the highlighted point and your questions about it. You get a deep, focused response without noise, while the main chat stays uncluttered.
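The three steps above can be sketched as a small data model. This is a minimal illustration, not the code from KeaBase/kea-research: the names `Message`, `ResearchLayer`, and `start_research_layer`, and the wording of the system prompt, are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    role: str      # "system", "user", or "assistant"
    content: str


@dataclass
class ResearchLayer:
    """A side thread anchored to a highlighted span of one main-chat message."""
    source_index: int   # position of the source message in the main chat
    start: int          # selection start offset (inclusive)
    end: int            # selection end offset (exclusive)
    excerpt: str        # the highlighted text itself
    messages: list[Message] = field(default_factory=list)

    def ask(self, question: str) -> None:
        # Questions are stored in the layer, never in the main chat.
        self.messages.append(Message("user", question))

    def context(self) -> list[Message]:
        # The model sees only the excerpt plus this layer's own exchange.
        anchor = Message("system", f"Focus on this excerpt: {self.excerpt!r}")
        return [anchor, *self.messages]


def start_research_layer(chat: list[Message], source_index: int,
                         start: int, end: int) -> ResearchLayer:
    """Step 1 (partial selection) and step 2 (branching) combined."""
    text = chat[source_index].content
    if not 0 <= start < end <= len(text):
        raise ValueError("selection is outside the message")
    return ResearchLayer(source_index, start, end, text[start:end])


main_chat = [
    Message("user", "How do transformers work?"),
    Message("assistant", "They rely on self-attention to weigh tokens."),
]
reply = main_chat[1].content
start = reply.index("self-attention")
layer = start_research_layer(main_chat, 1, start, start + len("self-attention"))
layer.ask("What does this term mean?")
```

Because the layer keeps its own message list, asking a question there leaves `main_chat` untouched, which is the split-view behavior described above.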

Why this changes the game

Accuracy and Context Saving

When you clarify a detail in the main chat, you feed the model the entire conversation history with every request. This wastes tokens and dilutes the model’s attention. In a Research Layer, you send only the highlighted passage and your questions about it, so the model can answer more precisely and is less prone to errors caused by an overloaded context.
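To make the difference concrete, here is a rough comparison of what each approach sends for the same follow-up question. It is only a sketch: the word count is a crude stand-in for a real tokenizer, and the function names and sample conversation are invented for illustration.

```python
def rough_token_count(texts):
    """Crude proxy for tokens: count whitespace-separated words."""
    return sum(len(text.split()) for text in texts)


def main_chat_payload(history, follow_up):
    # A linear chat resends every earlier message with each follow-up.
    return [*history, follow_up]


def layer_payload(excerpt, layer_history, follow_up):
    # A layer sends only the highlighted excerpt and its own short thread.
    return [excerpt, *layer_history, follow_up]


history = [
    "Explain how a search engine ranks pages.",
    "Ranking combines crawling, indexing, link analysis and relevance scoring.",
    "How does crawling work?",
    "A crawler follows links and stores the pages it fetches.",
]
follow_up = "What is link analysis?"

linear = rough_token_count(main_chat_payload(history, follow_up))
layered = rough_token_count(layer_payload("link analysis", [], follow_up))
print(f"linear chat: {linear} words, research layer: {layered} words")
```

The gap widens as the main conversation grows, because the linear payload grows with every exchange while the layer payload depends only on the excerpt and the layer's own thread.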

Purity of Thought

Your main chat is the highway of ideas. Your layers are your laboratory. Don’t mix strategy with tactics.

Marginal Notes

The final insight from a layer can be saved as a note linked to the original text. The knowledge is captured exactly where it’s needed.
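One way to picture a note "linked to the original text" is to store it with the offsets of the passage it annotates. The sketch below assumes a hypothetical `MarginalNote` record and `NoteStore` class; the real implementation may anchor notes differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarginalNote:
    message_id: str   # hypothetical identifier of the annotated message
    start: int        # offset where the annotated span begins
    end: int          # offset where it ends (exclusive)
    text: str         # the insight carried back from the layer


class NoteStore:
    """Keeps notes grouped by the message they annotate."""

    def __init__(self):
        self._notes = {}

    def save(self, note):
        self._notes.setdefault(note.message_id, []).append(note)

    def notes_for(self, message_id):
        # Return notes in reading order, by where their span starts.
        return sorted(self._notes.get(message_id, []), key=lambda n: n.start)


store = NoteStore()
store.save(MarginalNote("msg-2", 40, 54, "Attention weights sum to 1 per token."))
store.save(MarginalNote("msg-2", 13, 27, "Self-attention compares every token pair."))

for note in store.notes_for("msg-2"):
    print(f"[{note.start}:{note.end}] {note.text}")
```

Keeping the offsets alongside the note lets an interface render it in the margin next to the exact phrase that prompted the layer.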

“I believe that interfaces of the future are more than just ‘chat.’ They are tools for structuring thoughts. Research Layers is a contribution to this standard.”

Open Concept by Stanislaw

Find me on: X (Twitter), LinkedIn, GitHub
