Request for Proposals: The Launch Sequence | IFP

Summary

About The Launch Sequence

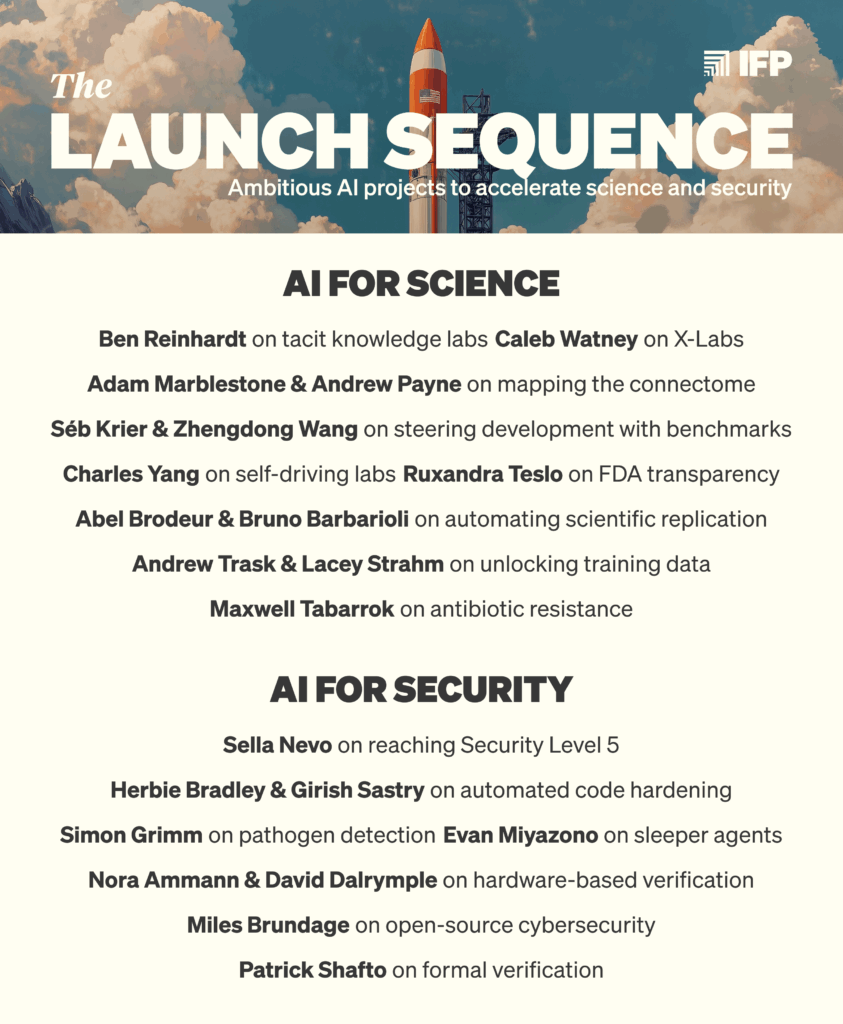

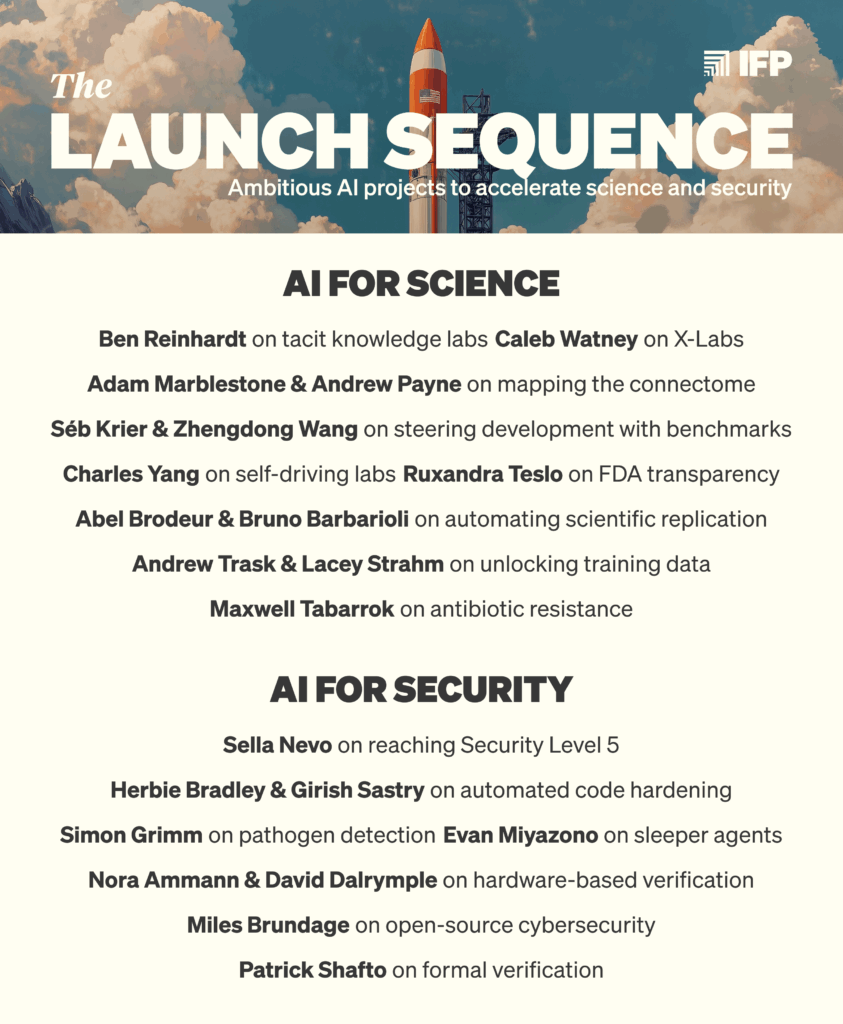

In 2025, IFP published The Launch Sequence, a set of proposals aimed at answering one question: what does America need to build, which will not be built fast enough by default, to prepare for a future with advanced AI?

As we explained in the foreword to the collection, Preparing for Launch, we need to solve two broad problems:

We invited some of the sharpest thinkers, engineers, and scientists working on these topics to write 16 concrete proposals to address these problems.

Since then, many of the proposals have gained real-world traction:[5]

What we are doing now

Major new funding is flowing into this space. The OpenAI Foundation committed $25 billion to AI resilience and curing diseases. The Chan-Zuckerberg Initiative is refocusing most of its philanthropic spending on AI projects to “cure all disease.” The White House launched the Genesis Mission, a “national effort to unleash a new age of AI‑accelerated innovation and discovery.” Philanthropies and government officials are asking us for more ideas about where this money should be directed.

This is a momentous opportunity, and how well these funds are spent will depend both on the quality of available ideas and on teams being ready to implement them.

That’s why The Launch Sequence is transitioning into a rolling effort to:

The output of The Launch Sequence will be a collection of thoroughly vetted, detailed project plans for philanthropists to fund or for policymakers to implement.[7]

Our advisors

We’re excited to announce our official Advisory Panel for the project, including:

What makes a good pitch

We are seeking initial short pitches (around 200–400 words) that address one of the three focus areas of this RFP: accelerating science, strengthening security, and adapting institutions. See “Ideas we are interested in” at the bottom of this post for full descriptions of these focus areas.

Your pitch should:

We are interested in projects that are particularly important to achieve in light of rapid advances in AI. This means that the capabilities of advanced AI, or the changes such capabilities will bring, should be a key part of either the problem or the solution.[8] If you are unsure whether a proposal is in scope, we encourage you to submit it anyway.

A non-exhaustive list of the kinds of project ideas we’re excited about can be found at the bottom of this post. You can also see the project ideas we’ve already published on our website: ifp.org/launch.

Who should submit a pitch

We welcome pitches from two broad groups of contributors:

We are especially interested in pitches from people who would consider implementing their proposal themselves.[10] This is not a requirement; we are also interested in hearing from strategists, researchers, and domain experts who can articulate what technologies or projects should exist, even if they are not the ones to build them. We also offer a $1,000 bounty for successful referrals: if you know someone who might be interested in being an author, please share this page with them and ask them to include your name in the application form. If their proposal is accepted, you’ll receive the bounty.

How it works

We expect authoring full Launch Sequence project plans to take 8–14 weeks from accepted pitch to published piece, involving several stages of writing, receiving feedback from IFP and input from external experts, and refining your full project plan.

IFP is a 501(c)(3) nonprofit organization, and will have no claim over any IP related to your idea, nor ownership of any resulting companies. We aim to accelerate authors and builders.

Submit your pitch

If you have an idea, we want to hear it. You can learn more and submit an idea via the form below.

This is a rolling RFP with no submission deadline, but we encourage you to submit a pitch early (within the next few weeks), as we will prioritize early submissions and start reviewing immediately.

Questions? Email launch.sequence@ifp.org

Ideas we are interested in

We are interested in rapidly building what we need to prepare for a world with advanced AI so that we can fully reap AI’s benefits while managing its new threats. Such an agenda will include a wide-ranging set of projects, including creating companies, tools, technologies, institutions, research streams, resources, and public policies.

We will only consider projects that address a market failure (or policy gap) and will therefore not be built by default, or not quickly enough. We expect these issues to surface in three core categories: accelerating science, strengthening security, and adapting institutions.

The lists below are not meant to be exhaustive. Instead, they are meant to illustrate the kinds of projects that we would be excited to support. We encourage you to submit pitches for projects whether or not they are listed below.

Accelerating Science

What resources, technologies, or institutions will scientists need to unlock breakthroughs with AI and other emerging technologies, and which aren’t being created fast enough through market forces or traditional grant funding?

AI promises to transform how science is done. But leveraging AI advances for breakthroughs requires more than capable models. It requires infrastructure, institutions, and resources that no single lab or company has an incentive to build: shared datasets, standardized protocols, access to physical experimentation capacity, and new modes of peer review and validation. Traditional grant funding moves too slowly and often rewards the wrong things; private industry optimizes for what can become profitable, not what will most advance scientific progress. Without targeted investment and effort by the US government and philanthropies, scientific bottlenecks will limit AI-accelerated discovery even as the models improve. Below are non-exhaustive problem areas for which we are excited to receive submissions.

Health and biology. The highest ambition for AI may be to generate cures for inherited and infectious diseases. But even as AI companies pursue this goal, their efforts alone are unlikely to fully accomplish it: biology is immensely complex, wet lab work is messy and reliant on tacit knowledge, and treatments require extensive clinical trials and regulatory review before they can even start to be distributed to patients. And even if current technologies eventually scale to this lofty goal, delays, even on the order of years, will impose massive, life-altering costs on billions of people and cause avoidable suffering at scale. Moving faster, even to achieve the same outcome, can save millions of lives.

We are interested in proposals that offer ways to speed up biological research, decrease the time between a treatment’s discovery and its real-world availability, or create the policy entrepreneurship needed to massively improve healthspan around the world.

Novel infrastructure for the scientific process. AI tools are being developed to increase productivity in every industry, science included. However, while commercial interests are rapidly building AI tools for materials or drug discovery, efforts to improve the basic scientific process have received comparatively less attention. We are interested in “horizontal” infrastructure that improves the scientific process itself across fields. Projects to build better tooling for natural and physical sciences include:

Metascience. Scientists spend a great deal of time on the work that surrounds the research enterprise — developing systems to durably maintain and share data, writing and reviewing proposals, and interacting with their supporting and partner institutions. Each of these areas of work is already being transformed by AI, but conventional grants and institutions are slow to catch up. Efforts to reimagine how institutions should adapt to the age of AI include:

Other potential directions. The above categories are non-exhaustive. We would also like to receive proposals for other scientific research directions, such as physics, chemistry, or ecology, or proposals that address other unmet needs in the research, development, and innovation ecosystem.

Strengthening Security

What tools, technologies, or institutions can we build to ensure rapid AI advances do not undermine national security or public safety?

AI technologies are dual-use. The same capabilities that automate cyberdefense can automate cyberattacks, and the tools that accelerate progress in the life sciences may also reduce the barriers to engineering biological weapons. Furthermore, the transformative potential of AI raises the stakes of geopolitical competition and strengthens the ability of state and non-state actors to cause widespread and asymmetric harm. No single actor bears these risks alone, so targeted philanthropic and government action is required to ensure that defenses can scale alongside AI capabilities. We are particularly interested in achieving a world in which defensive technologies are structurally advantaged, such that attacks are quickly detected and contained. Below are non-exhaustive problem areas for which we are excited to receive submissions.

Cyber defense. The integration of AI into cyber and cyber-physical systems introduces a broad range of vulnerabilities that hinder AI adoption and increase the attack surface for critical infrastructure. Moreover, AI is already increasing the speed and scale of cyber attacks. By proactively investing in security and leveraging AI for defense, we can enhance our resilience. Potential projects in this space include:

Biological defense. Advances in AI and biotechnology are removing barriers to the development and design of biological weapons, which could cause millions of deaths and trillions in economic damage. How can we prevent the worst outcomes without slowing down beneficial research? Potential projects in this space include:

Verification and evaluation. AI challenges traditional technology-policy frameworks because it lacks many of the typical characteristics they address, like concrete physical forms, easily isolated components, and straightforward version control. To mitigate these problems, we need new methods for the verification of pertinent AI system characteristics (e.g., proving that certain data was used to train a model) and the measurement science of AI system capabilities and propensities (e.g., determining whether a benchmark accurately measures the risk-relevant properties it claims to). At the same time, these methods will not be effective if they leak or extract other critical information from the systems they are probing. Well-designed and privacy-preserving tools of this kind can enable a world in which governments can trust industry to manage this technology, and nations can credibly signal that their AI capabilities do not pose threats to international security. Potential projects in this space include:

Alignment and control. AI systems demonstrate sophisticated, unintended behaviors as well as the capacity to evade human oversight. As AI agents take on more and more consequential tasks and play a greater role in our personal lives, the risks from alignment and control failures increase. These dangers could compound significantly as coding agents become more integral to AI development. Potential projects in this space include:

Other potential directions. The above categories are not an exhaustive list of ideas we are interested in. Some other promising areas we would like to receive proposals for include:

Adapting Institutions

What tools, technologies, organizations, or policies are needed to help society adapt to rapid AI-driven change while preserving human agency, individual freedoms, and democratic institutions?

The development of advanced AI would alter the very foundations of social and economic life. Translating AI’s potential into widespread flourishing requires forward-looking institutions[12] and infrastructure (technological, organizational, and governmental) that can establish shared facts, coordinate at scale, and make rapid, well-informed decisions. Yet most existing systems were not built for the speed and complexity that AI enables, and markets lack the incentives to update some of these systems for this new reality. Below are non-exhaustive problem areas for which we are excited to receive submissions.

Increasing state capacity. Governments are slow-moving institutions, but it is imperative for them to respond quickly and competently to rapid AI progress. In a world of rapidly advancing AI, the US government has a crucial role to play as an enforcer of law and order, provider of public goods, and the R&D lab of the world.

Epistemic integrity. AI dramatically lowers the cost of generating large volumes of apparently high-quality content, straining our ability to distinguish facts from fiction or propaganda. However, new infrastructure designed to establish ground truths, incorporate a variety of viewpoints in well-organized discussions, and analyze large amounts of data can create a more dynamic marketplace of ideas than ever before. We should be wary of interventions that give any one person, company, or interest group the power to adjudicate what is true and what isn’t — distributed solutions like Community Notes could instead provide less-brittle alternatives. Potential projects in this space include:

Coordination. The costs of coordination — identifying counterparties, becoming informed, negotiating priorities and agreements, and ensuring adherence to terms — mean that many mutually beneficial agreements between people in the world never actually get made. Likewise, the cost of coordination hinders many individuals’ ability to participate in the governance decisions that affect their lives. AI could greatly reduce the costs of coordination, enabling individuals to reach positive-sum outcomes and directly participate in governance at unprecedented scale.

Building resources to maintain human agency. In recent history, people have maintained economic and political power because they were needed as workers, taxpayers, soldiers, and voters whose cooperation institutions depended on. As advanced AI automates increasingly large parts of the economy, the risk goes beyond broad unemployment — it’s that as people lose economic leverage, their institutional leverage will suffer too. If institutions can function without broad human participation, they may become less responsive to human needs. Markets and governments will likely produce some tools for adaptation, but may do so unevenly or too slowly to keep pace with AI progress. We’re interested in projects that help people maintain economic relevance and institutional leverage even as advanced AI automates large parts of the workforce.

We believe this effort is critical, but we are unsure as to what the most promising proposals in this area may be. Proposals in this category should make an especially strong case for why markets or other institutions won’t provide the solution fast enough by default. Possible proposals in this area include concrete programs to help people rapidly adapt their skills; human-in-the-loop tooling to enable workers to efficiently supervise, direct, and collaborate with AI systems at machine speed; and benefits-sharing programs or policies to ensure the broad automation of labor benefits the general population.

Other potential directions. The above categories are not an exhaustive list of ideas we are interested in. Some other promising areas we would like to receive proposals for include:

Acknowledgements: Thank you to Gaurav Sett, Non-Resident Fellow at IFP, for closely consulting on this piece.

By “advanced AI” we mean highly autonomous systems that match or outperform humans at most cognitive tasks and economically valuable work. See “Ideas we are interested in” below for more information.

More details under “Who should submit a pitch.”

AI could unlock treatments for the most debilitating human diseases. But some of these fundamental breakthroughs will lack clear commercial incentives or face other barriers. If AI greatly accelerates science, unaddressed bottlenecks will become especially acute, and proactively eliminating these bottlenecks will become especially important.

We also submitted these proposals to the American Science Acceleration Project RFI, bound them into a beautiful book, and are sending copies to all 535 offices in Congress.

Submissions to the FDA for drug approval, which collectively form one of the most exhaustive repositories of real-world scientific practice and regulatory negotiation ever assembled, and thus a rich resource for AI to be trained on.

While the original Launch Sequence proposals were primarily aimed at the US government, we’re broadening our focus to include projects that can move forward just with philanthropic support. Given our new focus on providing shovel-ready ideas for funders, we will dedicate many more resources to vetting and refining project proposals than we did in the past.

Still, the US government can play a powerful role in implementing many proposals at scale, and we are excited to support proposals that require or benefit from government action.

This should be interpreted broadly. For example, a pitch for an organization to manufacture next-gen personal protective equipment (PPE) to increase society’s resilience against pandemics would be in scope, because future AI may democratize access to the knowledge and tools needed to create engineered viruses, thus increasing our baseline pandemic risk.

Examples with links to existing Launch Sequence project plans:

- Cases where AI creates/worsens a problem (e.g., biosecurity, offensive cybersecurity, AI sleeper agents, securing AI model weights)
- Cases where AI can be/support the solution (e.g., automating scientific replication, pathogen detection via metagenomics and ML, AI-powered code refactoring)
- Cases where AI makes something newly feasible, which will not be done fast enough by default (e.g., connectome mapping, self-driving labs)

Note: You will be eligible for this bounty if: (1) we first learn about a particular project idea based on your pitch, and (2) you selected “I just want to submit an idea” in the application form, and (3) we then publish a full project plan based on your initial pitch.

We will ultimately determine whether we had already considered a project idea, or whether your pitch was the first time we encountered it. Only one person will be eligible for the “idea scout bounty” for every piece we publish.

If you're proposing a new research group or institution, we are excited to help accelerate the potential founder. If you're proposing a government program, we are excited to make the right connections and help the author make it happen.

We’ll aim to respond to initial pitches within a few weeks of submission, to allow time for us to investigate the area and consult with our advisors and domain experts.

“Institutions” in this RFP should be interpreted broadly, as “the humanly devised constraints that structure political, economic, and social interaction.”